It is difficult for a very large-scale system to complete long-running executions, because system reliability worsens as the number of processing units increases. Occasionally, slow nodes would also prolong the total execution time.

To simulate the generation of a sample on Tianhe-2, it is necessary to compute the probability Pr, in which the main time-consuming task is to calculate the permanent of an n × n sub-matrix U_{S,T} of U. The capacity of computing the permanent on Tianhe-2 is employed to benchmark the state of the art and to set an upper bound on the classical execution time to be beaten by the quantum boson-sampling machine. The two most efficient permanent-computing algorithms, Ryser's algorithm and BB/FG's algorithm, both have a time complexity of O(n^2 · 2^n). We implemented Ryser's algorithm and BB/FG's algorithm (see the supplementary material for details) and ran them on the Tianhe-2 supercomputer. This supercomputer consists of 16 000 computing nodes, each containing two CPUs and three co-processors, denoted as MIC. The programs were tested under two types of configurations: running with only CPUs, or hybrid running with both CPUs and MICs. We ran Ryser's algorithm with the number of nodes ranging from 2048 to 13 000, as shown in Table 1.
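As a rough illustration of the permanent computation discussed here, the following minimal Python sketch evaluates Ryser's inclusion-exclusion formula directly, at O(n^2 · 2^n) cost. It is not the implementation that ran on Tianhe-2: the function names `ryser_partial`, `permanent_ryser` and `permanent_naive`, and the `workers` parameter, are hypothetical. The split of the subset range into chunks only hints at how independent partial sums could be handed to separate nodes; here the chunks are simply evaluated one after another.

```python
from itertools import permutations
from math import prod


def ryser_partial(a, start, stop):
    """Sum the Ryser terms for column-subset bitmasks in [start, stop)."""
    n = len(a)
    total = 0
    for mask in range(max(start, 1), stop):  # the empty subset contributes 0
        # Product over rows of the row sum restricted to the chosen columns.
        term = 1
        for row in a:
            term *= sum(row[j] for j in range(n) if (mask >> j) & 1)
        # Each subset S carries the sign (-1)^(n - |S|).
        sign = -1 if (n - bin(mask).count("1")) % 2 else 1
        total += sign * term
    return total


def permanent_ryser(a, workers=1):
    """Permanent via Ryser's formula; `workers` is a hypothetical chunk count."""
    n = len(a)
    if n == 0:
        return 1
    size = 1 << n                    # number of column subsets, 2^n
    step = -(-size // workers)       # ceiling division
    # Each chunk is independent, so it could run on a different node.
    return sum(ryser_partial(a, lo, min(lo + step, size))
               for lo in range(0, size, step))


def permanent_naive(a):
    """Reference value from the definition: a sum over all n! permutations."""
    n = len(a)
    return sum(prod(a[i][p[i]] for i in range(n))
               for p in permutations(range(n)))


print(permanent_ryser([[1, 2], [3, 4]]))  # 10
```

Because every subset term is independent, the only communication such a scheme would need is a final sum of the partial results, which is one reason the formula suits a machine with many nodes.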

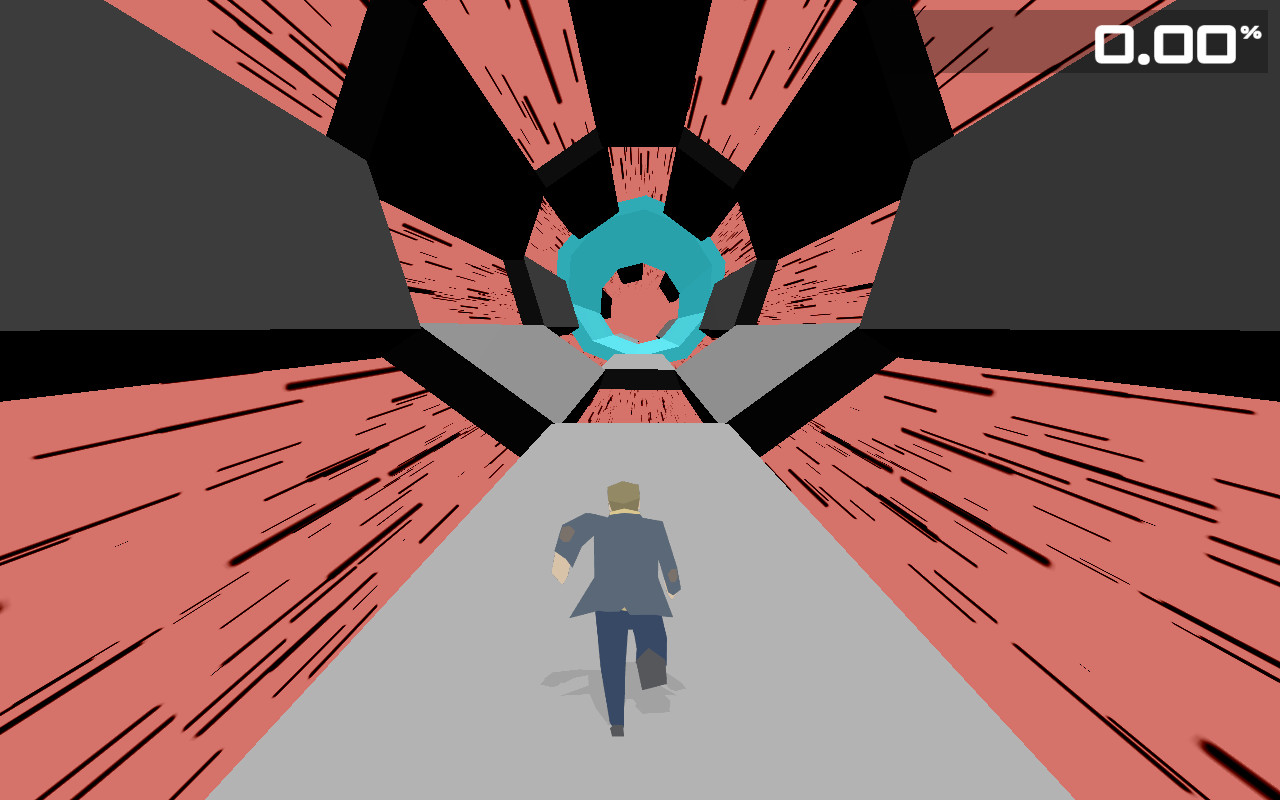

A schematic view of the computational task with the Tianhe-2 supercomputer (a) and a quantum boson-sampling machine (b). A quantum boson-sampling machine obtains an n-photon sample T directly through a measurement on the m output ports of the network, which is described by a unitary matrix U with input S.

Keywords: boson sampling, permanent, quantum supremacy, quantum computing, supercomputer

INTRODUCTION

Universal quantum computers promise to substantially outperform classical computers. However, building them has proved challenging in practice because of the stringent requirements of high-fidelity quantum gates and scalability. For example, Shor's algorithm, which solves the integer factorization problem, is one of the most attractive quantum algorithms because of its potential to crack current mainstream RSA cryptosystems. The key size crackable on classical computers is 768 bits. Factoring a key of this size, however, would require millions of qubits on a quantum computer, far beyond current technology. This gap motivates research into purpose-specific quantum computation with quantum speedup and more favorable experimental conditions.

Boson sampling is a specific quantum computation thought to be an outstanding candidate for beating the most powerful classical computer in the near term. It samples the distribution of bosons output from a complex interference network. Unlike universal quantum computation, quantum boson sampling seems more straightforward, since it requires only identical bosons, linear evolution and measurement. On classical computers, the distribution can be obtained by computing permanents of matrices derived from the unitary transformation matrix of the network, and calculating these permanents is the most time-consuming part of the simulation. However, computing the permanent has been proved to be a #P-hard task on classical computers. This motivates massive advances in building larger quantum boson-sampling machines to outperform classical computers, including proof-of-principle experiments, simplified models that are easy to implement, implementation techniques, robustness of boson sampling, validation of large-scale boson sampling, varied models for other quantum states, etc.

However, what is the capacity bound of a state-of-the-art classical computer for simulating boson sampling? This bound indicates the condition under which a quantum boson-sampling machine will surpass classical computers.
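To make the link between output probabilities and permanents concrete, here is a small Python sketch under stated assumptions. It uses the standard boson-sampling expression Pr(S → T) = |Perm(U_{S,T})|^2 / (s_1! ⋯ s_m! t_1! ⋯ t_m!), and it assumes the convention that U_{S,T} repeats column j of U s_j times and row i of U t_i times. The helper names `submatrix` and `output_probability` are hypothetical, and the permanent is taken straight from its definition, which is practical only for very small n. The example is a balanced two-mode beam splitter with one photon in each input, where the coincidence outcome (1, 1) is suppressed.

```python
from itertools import permutations
from math import factorial, prod, sqrt


def permanent(a):
    """Permanent from the definition (n! terms); fine for tiny matrices only."""
    n = len(a)
    return sum(prod(a[i][p[i]] for i in range(n))
               for p in permutations(range(n)))


def submatrix(u, s, t):
    """Build U_{S,T}: repeat column j s[j] times and row i t[i] times (assumed convention)."""
    cols = [j for j, count in enumerate(s) for _ in range(count)]
    rows = [i for i, count in enumerate(t) for _ in range(count)]
    return [[u[i][j] for j in cols] for i in rows]


def output_probability(u, s, t):
    """Probability of output occupation t given input occupation s."""
    norm = prod(factorial(k) for k in s) * prod(factorial(k) for k in t)
    return abs(permanent(submatrix(u, s, t))) ** 2 / norm


h = 1 / sqrt(2)
beam_splitter = [[h, h], [h, -h]]   # balanced two-mode unitary
probs = {t: output_probability(beam_splitter, (1, 1), t)
         for t in [(2, 0), (1, 1), (0, 2)]}
print(probs)
```

Up to floating-point rounding, the three probabilities are 0.5, 0 and 0.5, and they sum to 1. For n photons each probability needs one n × n permanent, which is where the classical cost of the simulation concentrates.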
